This series of posts contains my study notes on the book Deep Learning with Python, 3rd edition.

Code repository: https://github.com/fchollet/deep-learning-with-python-notebooks

Read online: https://deeplearningwithpython.io/chapters/chapter01_what-is-deep-learning/

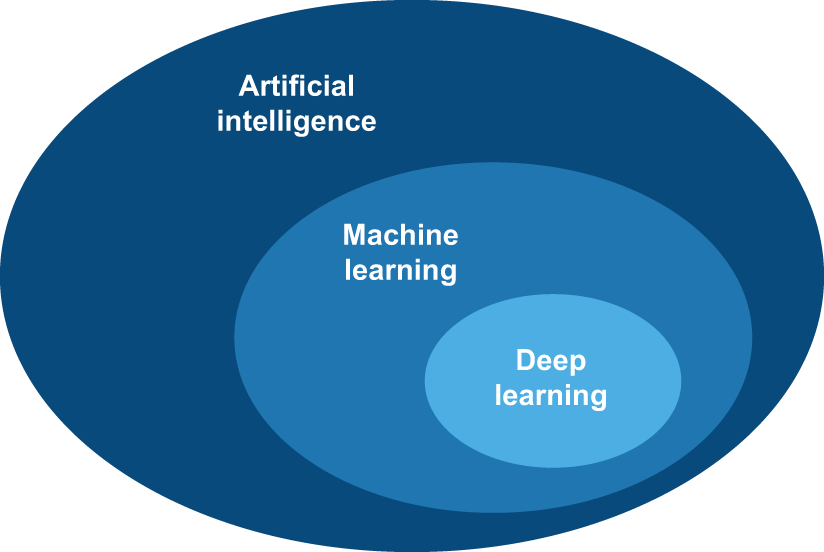

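AI (artificial intelligence)

machine learning

deep learning

These three are nested from top to bottom: AI contains machine learning, and machine learning contains deep learning.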

AI built from rules written by hand is called symbolic AI.

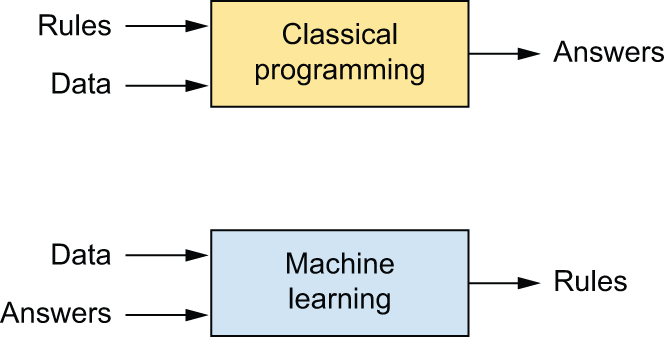

Machine Learning

A machine learning system is trained rather than explicitly programmed.

Learning rules and representations from data

Machine learning needs three things:

1. Input data points
2. Examples of the expected output
3. A way to measure whether the algorithm is doing a good job

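The third item determines the distance between the algorithm's current output and the expected output. This measurement is then used as a feedback signal to adjust the algorithm's parameters, step by step, toward an optimal solution.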
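A minimal sketch of the three ingredients on made-up data (the temperatures, labels, and function names below are hypothetical, not from the book). The hand-written rule stands for the symbolic-AI approach; the machine-learning version picks its threshold by using the error count as the feedback signal.

```python
# Hypothetical toy task: decide whether a temperature reading is "hot" (1) or not (0).
inputs = [12.0, 18.5, 21.0, 24.0, 27.5, 30.0, 33.0]  # 1. input data points
expected = [0, 0, 0, 1, 1, 1, 1]                       # 2. examples of the expected output


def hand_written_rule(x):
    # Symbolic AI: a human chooses the threshold explicitly.
    return 1 if x > 30.0 else 0


def error_count(threshold):
    # 3. A way to measure how well the algorithm is doing:
    # the number of inputs that the rule "x > threshold" gets wrong.
    return sum((1 if x > threshold else 0) != y for x, y in zip(inputs, expected))


# Machine learning in miniature: try candidate thresholds (10.0, 10.5, ..., 34.5)
# and keep the one the feedback signal (error count) says is best.
candidates = [t / 2 for t in range(20, 70)]
learned_threshold = min(candidates, key=error_count)

hand_errors = sum(hand_written_rule(x) != y for x, y in zip(inputs, expected))
print("hand-written rule errors:", hand_errors)
print("learned threshold:", learned_threshold, "errors:", error_count(learned_threshold))
```

The rule's form is fixed here; only its parameter is learned. Real models learn far more parameters, but the loop of "measure, then adjust" is the same idea.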

The central problem in machine learning and deep learning is to meaningfully transform data.

Meaningfully transforming data means learning useful representations of the input data at hand: representations that get us closer to the expected output.

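Representation

A representation is a different way to look at, or encode, the same data. For example, a color image can be encoded in RGB format or in HSV format.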
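The same pixel in two representations, using Python's standard `colorsys` module (an illustration chosen for these notes, not code from the book). Channel values are floats in [0, 1], which is what `colorsys` expects.

```python
import colorsys

# One pure-red pixel in the RGB representation, channels scaled to [0, 1].
r, g, b = 1.0, 0.0, 0.0

# The same pixel in a different representation: hue, saturation, value.
h, s, v = colorsys.rgb_to_hsv(r, g, b)
print("HSV:", (h, s, v))  # (0.0, 1.0, 1.0)

# Halving the saturation is a single multiplication in HSV.
desaturated = colorsys.hsv_to_rgb(h, s * 0.5, v)
print("desaturated RGB:", desaturated)  # (1.0, 0.5, 0.5)
```

Some tasks are simpler in one representation than in another: lowering saturation is one multiplication in HSV, while in RGB the same edit changes several channel values at once.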

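Machine learning, then, is searching for useful representations and rules over some input data, within a predefined space of possibilities, using guidance from a feedback signal.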
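A toy sketch of that definition, assuming made-up 2D points and a fixed rule "first coordinate > 0". The predefined space of possibilities is a set of rotations of the coordinate axes, the feedback signal is classification accuracy, and the search keeps the rotation (the representation) that makes the fixed rule work. All names and data here are hypothetical.

```python
import math

# Hypothetical toy data: 2D points labeled 1 when x + y > 0, else 0.
points = [(1.0, 0.5), (0.2, 0.3), (-0.5, 1.0), (2.0, -1.5),
          (-1.0, -0.2), (0.5, -1.0), (-2.0, 1.0), (-0.3, -0.4)]
labels = [1 if x + y > 0 else 0 for x, y in points]


def represent(point, angle_deg):
    # Candidate representation: the point's first coordinate after
    # rotating the coordinate axes by angle_deg.
    x, y = point
    a = math.radians(angle_deg)
    return x * math.cos(a) + y * math.sin(a)


def accuracy(angle_deg):
    # Feedback signal: apply the fixed rule "new coordinate > 0" to the
    # new representation and measure how often it matches the labels.
    hits = sum((represent(p, angle_deg) > 0) == bool(lbl) for p, lbl in zip(points, labels))
    return hits / len(points)


# Predefined space of possibilities: rotations in 15-degree steps.
hypothesis_space = range(0, 360, 15)
best_angle = max(hypothesis_space, key=accuracy)

print("raw x coordinate, accuracy:", accuracy(0))
print("best angle:", best_angle, "accuracy:", accuracy(best_angle))
```

The rule never changes; what the search finds is a coordinate system in which the simple rule separates the two classes.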

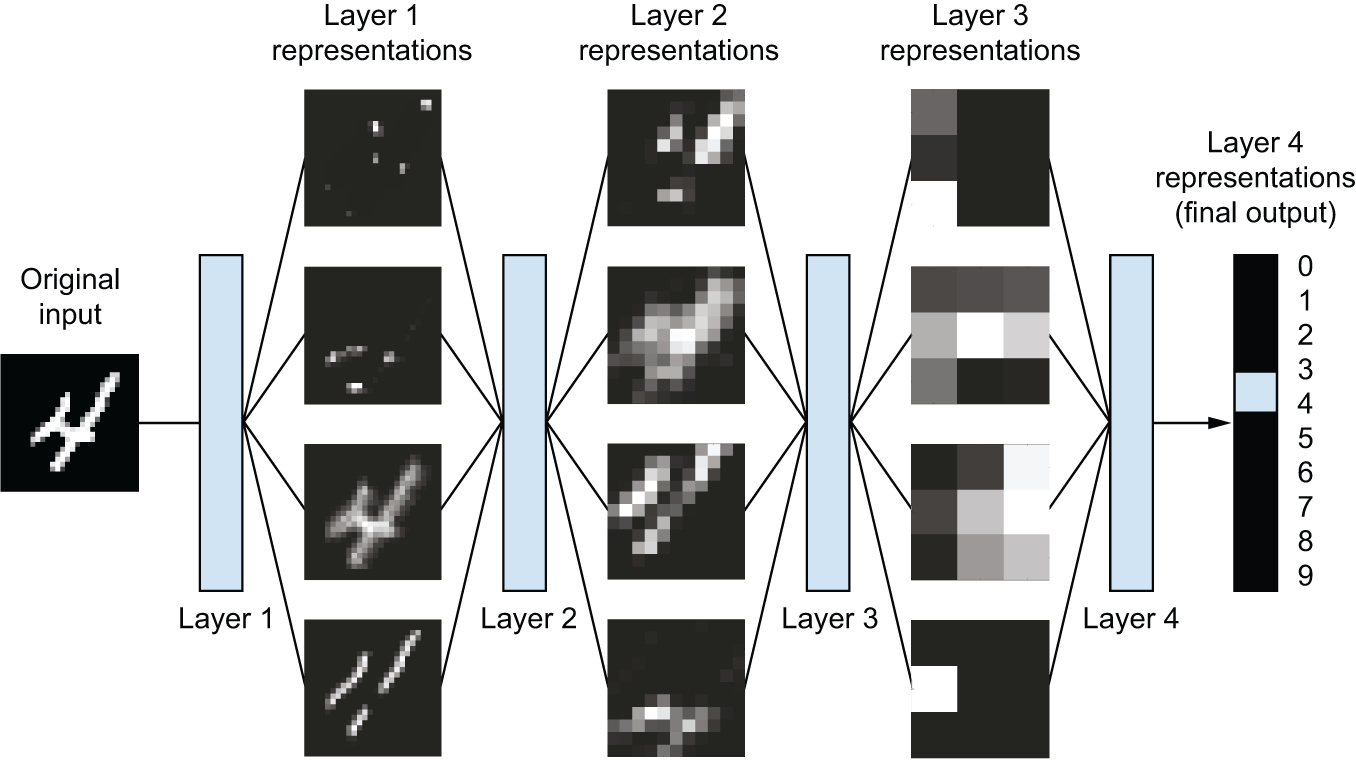

The “deep” in “deep learning”

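The “deep” does not refer to some deeper kind of understanding; it stands for the idea of successive layers of representations.

The number of layers that contribute to a model is called the depth of the model.

Modern deep learning often involves tens or even hundreds of successive layers of representations.

Other approaches to machine learning that learn only one or two layers of representations of the data are called shallow learning.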
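A plain-Python sketch of "successive layers": the model is just a list of transformations applied one after another, and its depth is the length of that list. The three layer functions are hypothetical, hand-written stand-ins; in a real network each layer's transformation is learned.

```python
# Three hypothetical layers, each a simple transformation of a list of numbers.
def layer_1(xs):
    return [2.0 * x for x in xs]            # scale


def layer_2(xs):
    return [max(0.0, x - 1.0) for x in xs]  # shift, then clip negatives (ReLU-like)


def layer_3(xs):
    return [sum(xs)]                        # reduce to a single output value


model = [layer_1, layer_2, layer_3]
depth = len(model)  # depth of the model = number of layers


def forward(model, xs):
    # Each layer's output representation becomes the next layer's input.
    for layer in model:
        xs = layer(xs)
    return xs


print("depth:", depth)
print("output:", forward(model, [0.2, 0.8, 1.5]))
```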

These layered representations are learned via models called neural networks, structured in literal layers stacked on top of each other.

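A deep network can be seen as a multistage information-distillation process: the information passes through successive filters and comes out increasingly purified, that is, increasingly useful for the task.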

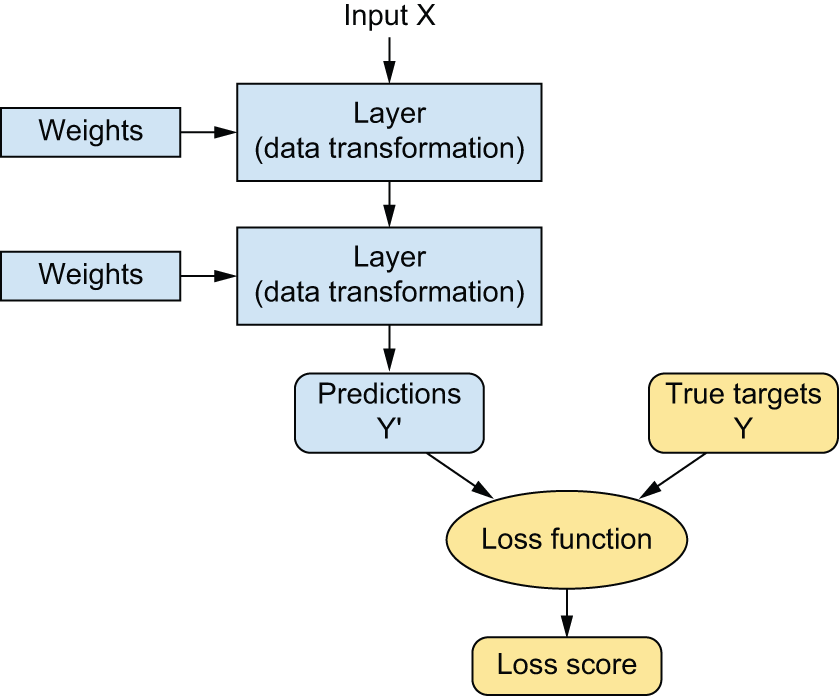

Deep neural networks map inputs to targets via a deep sequence of simple data transformations (layers), and these data transformations are learned by exposure to examples.

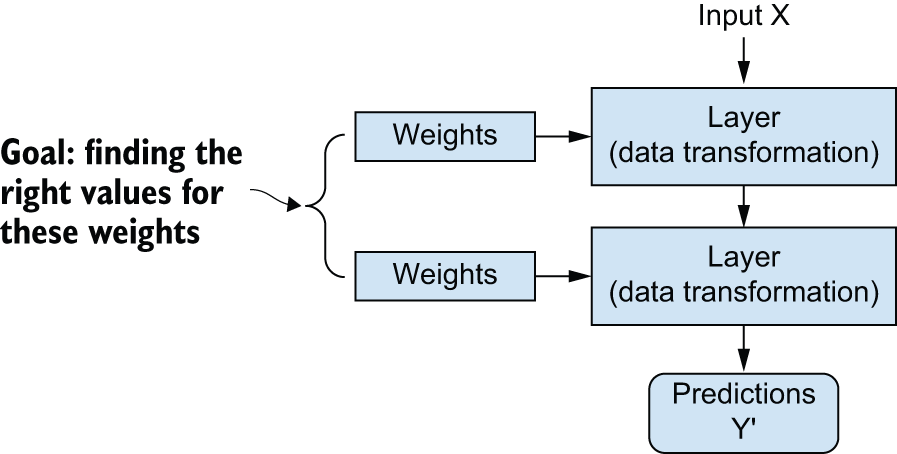

The transformation implemented by a layer is parameterized by its weights, which are essentially just a bunch of numbers. In the same way, a neural network as a whole is parameterized by its weights.

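Learning means finding a set of values for the weights of all layers, such that the network correctly maps example inputs to their associated targets.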
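A minimal sketch of a layer parameterized by weights, assuming a dense layer of the form `relu(W·x + b)` written in plain Python (frameworks such as Keras implement this with tensors). The weight values are arbitrary; the point is that the same layer computes different transformations for different weights, so learning amounts to choosing those numbers.

```python
# A dense layer: output_i = relu(sum_j W[i][j] * x[j] + b[i]).
# W (a matrix) and b (a vector) are the layer's weights.
def dense(x, W, b):
    return [max(0.0, sum(w_ij * x_j for w_ij, x_j in zip(row, x)) + b_i)
            for row, b_i in zip(W, b)]


x = [1.0, 2.0, 3.0]

# Two arbitrary weight settings for the same layer shape (3 inputs -> 2 outputs).
W_a, b_a = [[0.5, -1.0, 0.2], [0.0, 0.3, -0.1]], [0.1, 0.0]
W_b, b_b = [[1.0, 0.0, 0.0], [0.0, 1.0, 1.0]], [0.0, 0.5]

print(dense(x, W_a, b_a))  # one transformation of x
print(dense(x, W_b, b_b))  # same layer, different weights: [1.0, 5.5]
```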

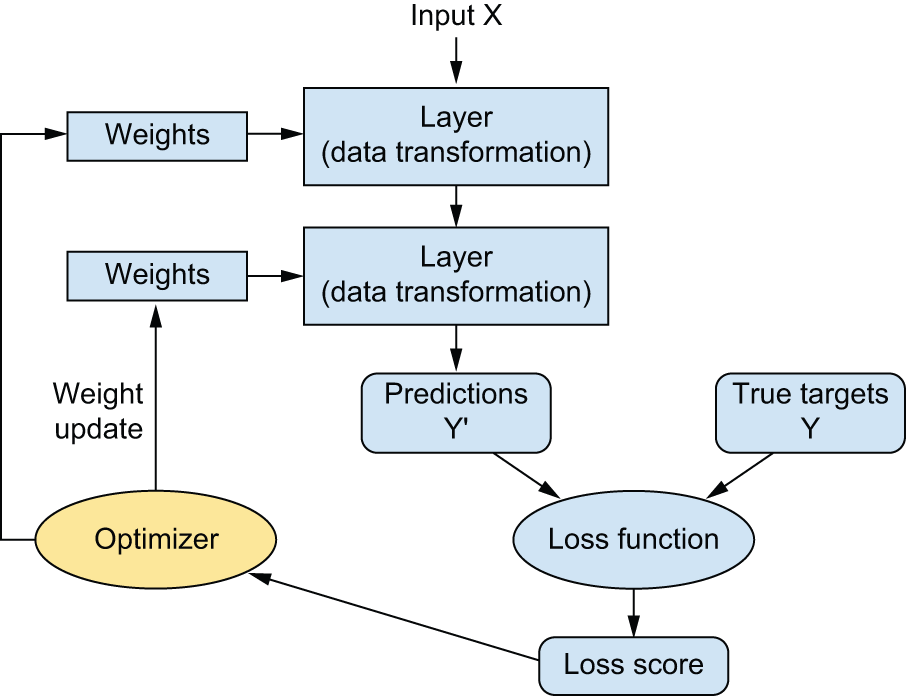

The loss function computes a distance score between the network's predictions and the true targets.
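Mean squared error is used here purely as an example of a loss function (it is a common choice for regression; other tasks use other losses, such as crossentropy for classification). The function name and data are illustrative.

```python
def mean_squared_error(y_true, y_pred):
    # Loss score: average squared distance between targets and predictions.
    # Lower is better; 0.0 means the predictions match the targets exactly.
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


targets = [1.0, 0.0, 2.0]
print(mean_squared_error(targets, [1.0, 0.0, 2.0]))  # 0.0
print(mean_squared_error(targets, [0.0, 1.0, 2.0]))  # (1 + 1 + 0) / 3
```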

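The fundamental trick of deep learning is to use this score as a feedback signal to adjust the weights a little, in the direction that lowers the loss score.

This adjustment is the job of the optimizer.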

The optimizer implements the famous Backpropagation (BP) algorithm.
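A hand-worked sketch of what backpropagation computes, assuming a tiny two-layer scalar model and a squared-error loss (both hypothetical). The backward pass applies the chain rule from the loss back to each weight; a finite-difference estimate is included only to check the result. Real frameworks automate this step with automatic differentiation.

```python
# Tiny two-layer scalar model:
#   z = w1 * x;  h = relu(z)   (layer 1)
#   y_pred = w2 * h            (layer 2)
#   loss = (y_pred - y) ** 2
def forward(w1, w2, x, y):
    z = w1 * x
    h = max(0.0, z)
    y_pred = w2 * h
    loss = (y_pred - y) ** 2
    return z, h, y_pred, loss


def backward(w1, w2, x, y):
    # Reuse the intermediate values from the forward pass, then apply the
    # chain rule from the loss back toward the first layer.
    z, h, y_pred, _ = forward(w1, w2, x, y)
    d_ypred = 2.0 * (y_pred - y)           # dloss/dy_pred
    d_w2 = d_ypred * h                     # y_pred = w2 * h
    d_h = d_ypred * w2
    d_z = d_h * (1.0 if z > 0 else 0.0)    # derivative of relu
    d_w1 = d_z * x                         # z = w1 * x
    return d_w1, d_w2


def numerical_grad(w1, w2, x, y, eps=1e-6):
    # Central finite differences, used only to sanity-check backward().
    g1 = (forward(w1 + eps, w2, x, y)[3] - forward(w1 - eps, w2, x, y)[3]) / (2 * eps)
    g2 = (forward(w1, w2 + eps, x, y)[3] - forward(w1, w2 - eps, x, y)[3]) / (2 * eps)
    return g1, g2


print(backward(0.5, -1.5, 2.0, 3.0))        # (27.0, -9.0)
print(numerical_grad(0.5, -1.5, 2.0, 3.0))  # approximately the same values
```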

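Initially, the weights are assigned random values.

Over many iterations of the training loop on the training examples, the weights are adjusted until they minimize the loss function.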
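Putting the pieces together, a minimal training loop, assuming a one-layer linear model `w * x + b`, mean squared error as the loss, and plain gradient descent as the optimizer. The data, learning rate, and step count are made up for illustration, and the gradients are written out by hand instead of being produced by a framework's backpropagation.

```python
import random

# Hypothetical data generated from y = 3x + 1; the model should discover w ~ 3, b ~ 1.
xs = [-2.0, -1.0, 0.0, 1.0, 2.0, 3.0]
ys = [3.0 * x + 1.0 for x in xs]

random.seed(42)
w = random.uniform(-1.0, 1.0)  # the weights start out random
b = random.uniform(-1.0, 1.0)
learning_rate = 0.05


def loss_fn(w, b):
    # Mean squared error of the current weights on the training data.
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


initial_loss = loss_fn(w, b)
n = len(xs)
for _ in range(500):  # the training loop
    # Gradients of the mean squared error with respect to w and b.
    grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
    grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
    # Optimizer step (plain gradient descent): move the weights a little
    # in the direction that lowers the loss.
    w -= learning_rate * grad_w
    b -= learning_rate * grad_b

print(f"initial loss {initial_loss:.4f}, final loss {loss_fn(w, b):.6f}")
print(f"w = {w:.3f}, b = {b:.3f}")
```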

The age of generative AI

In generative AI, the targets come from the input data itself; this is called self-supervised learning.
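A sketch of how self-supervised targets come from the input itself, assuming a made-up sentence split into words: every training pair is (preceding words, next word), the next-word prediction setup behind text-generation models. The window length `context_size` is a hypothetical choice.

```python
# No human labels: each target is simply the next word in the same text.
text = "the cat sat on the mat"
tokens = text.split()

context_size = 3  # hypothetical maximum number of preceding words
pairs = []
for i in range(1, len(tokens)):
    context = tokens[max(0, i - context_size):i]
    target = tokens[i]
    pairs.append((context, target))

for context, target in pairs:
    print(context, "->", target)
```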

Generative AI only entered the mainstream in 2022.